
6/12/2023

Svm e0171 hyperplan

Tuning the SVM to find the best cost and gamma: `svm_tune <- tune(svm, train.x=x, train.y=y, …)`. The tuning output reports the sampling method: 10-fold cross validation.

After you find the best cost and gamma, you can create the SVM model again and run it again: `svm_model_after_tune <- svm(Species ~ ., data=iris, kernel="radial", cost=1, gamma=0.5)`

Run the prediction again with the new model: `pred <- predict(svm_model_after_tune, x)`. To measure its execution time: `system.time(predict(svm_model_after_tune, x))`

See the confusion matrix of the prediction, using the `table` command to compare the result of the SVM prediction with the class data in the `y` variable.

The prediction time of the model `svm_model1` can be measured the same way: `system.time(pred <- predict(svm_model1, x))`
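The `table` comparison described above counts how often each predicted class coincides with each true class. As a rough illustration of that idea outside R, here is a minimal Python sketch. The helper name `confusion_table` is hypothetical, and the labels are made up for the example; they are not results from the iris model.

```python
from collections import Counter


def confusion_table(predicted, actual):
    """Count (predicted, actual) label pairs, like a two-way contingency table."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    return Counter(zip(predicted, actual))


# Illustrative labels only; these are NOT outputs of the iris model.
actual = ["setosa", "setosa", "versicolor", "versicolor", "virginica", "virginica"]
predicted = ["setosa", "setosa", "versicolor", "virginica", "virginica", "virginica"]

table = confusion_table(predicted, actual)

# Entries where the predicted label equals the true label are the diagonal
# of the confusion matrix; their share of all samples is the accuracy.
correct = sum(n for (p, a), n in table.items() if p == a)
accuracy = correct / len(actual)
```

Off-diagonal keys such as `("virginica", "versicolor")` show which classes the model confuses, which is usually more informative than the accuracy alone.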

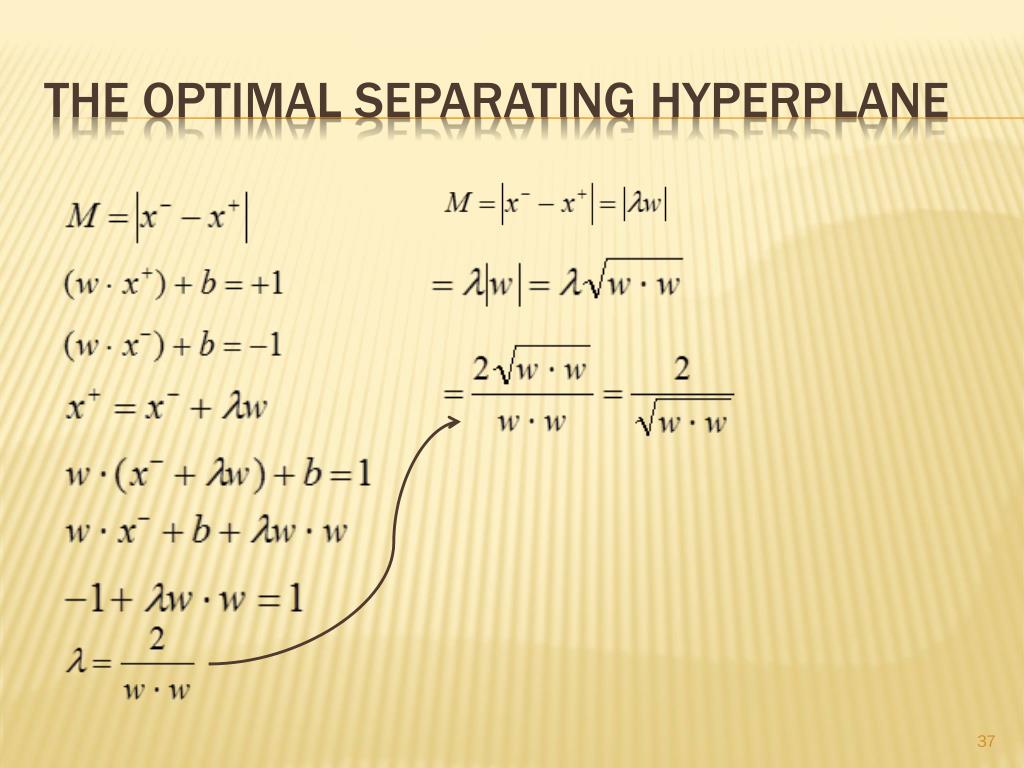

SVM example with Iris Data in R

Use the library e1071; you can install it using `install.packages("e1071")`.

The iris data has the columns Sepal.Length, Sepal.Width, Petal.Length, Petal.Width and Species.

Divide the iris data into x (containing all the features) and y (only the classes): `x <- subset(iris, select=-Species)`

Create an SVM model and show its summary: `svm_model <- svm(Species ~ ., data = iris)`

Create an SVM model from x and y and show its summary: `svm_model1 <- svm(x, y)`

Run the prediction; you can also measure the execution time in R: `pred <- predict(svm_model1, x)`

Learn more about svm, hyperplane, binary classifier, 3d plotting MATLAB. Hello, I am trying to figure out how to plot the resulting decision boundary from fitcsvm using 3 predictors. I was able to reproduce the sample code in 2-dimensions found here.

1) How to plot the data points in vector space (a sample diagram for the given test data will help me best). 2) How to calculate the hyperplane using the given sample.

In plotly, the hyperplane is drawn as a surface: `go.Surface(x=xx, y=yy, z=zz, opacity=.5, showscale=False, surfacecolor=np.zeros(zz.…`

The Perceptron guaranteed that you find a hyperplane if it exists. The SVM finds the maximum margin separating hyperplane. Setting: we define a linear classifier $h(x) = \operatorname{sign}(w^T x + b)$.

The prediction function $f(z)$ for an SVM model is, up to the scaling factor $\lVert w \rVert$, the signed distance of $z$ to the separating hyperplane. For a linear SVM, the separating hyperplane's normal vector $w$ can be written in input space, and we get $f(z) = \langle w, z \rangle + b = w^T z + b$, with $b$ the model's bias term. If a kernel function $\kappa(u, v) = \langle \varphi(u), \varphi(v) \rangle$ is used, $w$ typically can no longer be expressed in input space, but only in the space spanned by the embedding function $\varphi$.
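To make the linear case concrete, the following minimal Python sketch evaluates the decision value $w^T z + b$, the class it implies, and the geometric signed distance obtained by dividing by the norm of $w$ (the two coincide when $w$ has unit length). The function names and the numbers for `w` and `b` are assumptions chosen for illustration, not a fitted model.

```python
import math


def decision_value(w, b, z):
    """Linear decision function f(z) = w^T z + b."""
    return sum(wi * zi for wi, zi in zip(w, z)) + b


def classify(w, b, z):
    """Linear classifier h(z) = sign(f(z)); f(z) == 0 is mapped to +1 by convention."""
    return 1 if decision_value(w, b, z) >= 0 else -1


def signed_distance(w, b, z):
    """Geometric signed distance of z to the hyperplane w^T z + b = 0."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    if norm == 0:
        raise ValueError("w must not be the zero vector")
    return decision_value(w, b, z) / norm


# Hypothetical weights and bias, NOT taken from a trained SVM.
w = [3.0, 4.0]
b = -5.0

point_a = [3.0, 4.0]   # lies on the positive side of the hyperplane
point_b = [0.0, 0.0]   # lies on the negative side of the hyperplane

value_a = decision_value(w, b, point_a)
dist_a = signed_distance(w, b, point_a)
dist_b = signed_distance(w, b, point_b)
```

With these numbers the norm of `w` is 5, so the decision value and the distance differ by a factor of 5; only the sign is shared without rescaling.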
The Support Vector Machine (SVM) is a linear classifier that can be viewed as an extension of the Perceptron developed by Rosenblatt in 1958.

`SVM = SVC(kernel='poly', C=100, gamma=0.0001)` You can see a more detailed tutorial about how to tune Support Vector Machines by optimizing hyperparameters through the link below: Tuning Support Vector Machines

2- Training SVC: We have the model and we have the training data, so now we can start training the model.

The hyperplane is defined as $ax + by + cz + d = 0$, where $x, y, z$ are the three features, $a, b, c$ the fitted coefficients of the model and $d$ the fitted intercept. To express the hyperplane as a function of the z coordinate (the third feature), create a mesh grid over the x and y coordinates (the first two features): `w = model.coef_`

Then, using plotly for visualization: `go.Figure(…, y=X, z=X, mode="markers", showlegend=False, marker=dict(color=y, size=2.5, line=dict(color="black", width=1))), …`
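Solving the plane equation for the third coordinate gives z = -(a·x + b·y + d) / c, which is what gets evaluated on the mesh grid. Here is a minimal pure-Python sketch of that step, assuming c is nonzero. The helpers `linspace` and `plane_height` and the coefficient values are hypothetical stand-ins for the fitted coefficients and intercept, and nested lists replace the NumPy arrays a real plot would use.

```python
def linspace(start, stop, num):
    """Return num evenly spaced values from start to stop inclusive (num >= 2)."""
    if num < 2:
        raise ValueError("num must be at least 2")
    step = (stop - start) / (num - 1)
    return [start + i * step for i in range(num)]


def plane_height(a, b, c, d, x, y):
    """Solve a*x + b*y + c*z + d = 0 for z."""
    if c == 0:
        raise ValueError("c must be nonzero to express the plane as z(x, y)")
    return -(a * x + b * y + d) / c


# Hypothetical coefficients and intercept, NOT taken from a fitted model.
a, b, c, d = 1.0, 2.0, -4.0, 2.0

xs = linspace(0.0, 2.0, 3)   # grid over the first feature
ys = linspace(0.0, 1.0, 2)   # grid over the second feature

# Rows follow y and columns follow x, the same layout a mesh grid uses.
zz = [[plane_height(a, b, c, d, x, y) for x in xs] for y in ys]
```

Every grid point `(x, y, zz[row][col])` satisfies the plane equation, so passing the grid and `zz` to a surface trace draws the hyperplane. If c is zero, the plane is vertical and has to be expressed in terms of another coordinate instead.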

I want to visualize stylized hyperplanes for the 4 graphs. How can I achieve a hyperplane in the other 3 graphs (see picture)? Does anyone know some smart code for my purpose?

So suppose you fitted an SVM classifier as follows, where X is an array with 3 columns (features) and y has the same length as X and represents the classes: `model = SVC(kernel="linear").fit(X, y)`
